Whitepaper · Version 1.2

Tax Ready Bookkeeping™ + The AI Stack

A Four-Stage Data-Flow Whitepaper

How a small business turns raw financial transactions into decisions a fractional CFO can act on — and the discipline that makes the AI underneath it trustworthy.

Where this fits

This is the prospect-facing whitepaper for Tax Ready Bookkeeping™ and the AI Stack that runs underneath it.

Our advice

Read The Two Perspectives first.

For this whitepaper to land the way it’s meant to, your business needs to be ready to extract value from AI, and “ready” has a specific meaning. The Two Perspectives names the two disciplines (knowledge governance and operational data integration) that determine whether AI produces operating intelligence or expensive theater. Owners who skip it and start here often hear features like “industry-aware classifier” as things to evaluate; owners who read it first hear them as specific tooling answers to a question they have already framed for themselves.

Reading order

- The Two Perspectives — the AI-readiness diagnostic. ~10 minutes.

- Tax Ready Bookkeeping + The AI Stack — Whitepaper v1.2 — the bookkeeping-specific application (this page).

- ProjectBits Thought-OS™ — the full methodology umbrella.

Where the methodology comes from

Nothing here was invented from scratch. The maturity-model side draws on two anchors that sit alongside the five-lens diagnostic. The Financial Maturity Staircase is an application of Carnegie Mellon Software Engineering Institute’s Capability Maturity Model to financial operations: the same five-level structure SEI introduced for software engineering, recast for the disciplines that turn transactions into decisions. Knowledge governance draws on records-management discipline, specifically ISO 15489 and its four characteristics of a record: authenticity, reliability, integrity, and useability.

The five analytical lenses come from a small set of thinkers whose work has aged unusually well: structure from Geary Rummler and Alan Brache (process / job / organization levels, “the white space on the organization chart”); cognition from Gary Klein and Daniel Kahneman (recognition-primed decision-making, System-1 / System-2 thinking); development from Josh Waitzkin (The Art of Learning, deliberate practice); variation from W. Edwards Deming (common-cause vs special-cause variation, plan-do-check-act); and incentives from agency theory (Jensen and Meckling). Two operator-side frameworks complete the set: Peter Drucker on effectiveness and Larry Bossidy and Ram Charan on execution. Diagnostic discipline draws on Philip Tetlock’s calibration research.

What ProjectBits adds is the integration: the financial-data application, the AI-stack architecture, the engagement playbook, and the receipts of having operated it on ourselves.

The grist mill — one image to carry through

The financial data flow is the grist mill. AI is the miller’s apprentice. People are the designers and operators. And flour is not the goal — bread is.

Raw transactions come in like grain. The four-stage flow (sovereign, accurate, leverageable, informing decisions) is the milling. The classifier, the policy engine, the reconciliation engine, the audit log, the retrieval layer, the agent runtime: each is part of the mill. The output of the mill is flour: clean, defensible, leverageable financial data the CFO and the owner can trust. Flour is necessary, but flour is not the outcome.

Flour gets carried over to the baker — to the CFO Operating System — where it becomes the actual things owners hire a CFO to deliver: a pricing call you can defend, a customer fired with the numbers to back it, a growth bet sized against a 13-week cash forecast, a payroll decision against utilization actuals, a year-end conversation that opens with strategy instead of cleanup. That is the bread, the cake, the cookies. Take the mill away and AI has nothing to grind. Take the baker away and flour piles up in the pantry.

From Messy Data to Predictive Growth — the four-stage Financial Maturity Staircase, the grist mill, and the CFO Operating System in one frame.

Many “AI bookkeeping” products ship you the oven and forget the mill. They categorize what’s already in QBO, hallucinate when they lack context, and produce little the operator can defend at year-end. Tax Ready Bookkeeping runs the mill. The AI Stack is what makes the mill worth running. The next book, The CFO Operating System, is the baker, and the bread is the decisions that grow the business.

The Financial Grist Mill — grain to flour to bread, with the baker explicit.

How the mill was built — and how it gets better

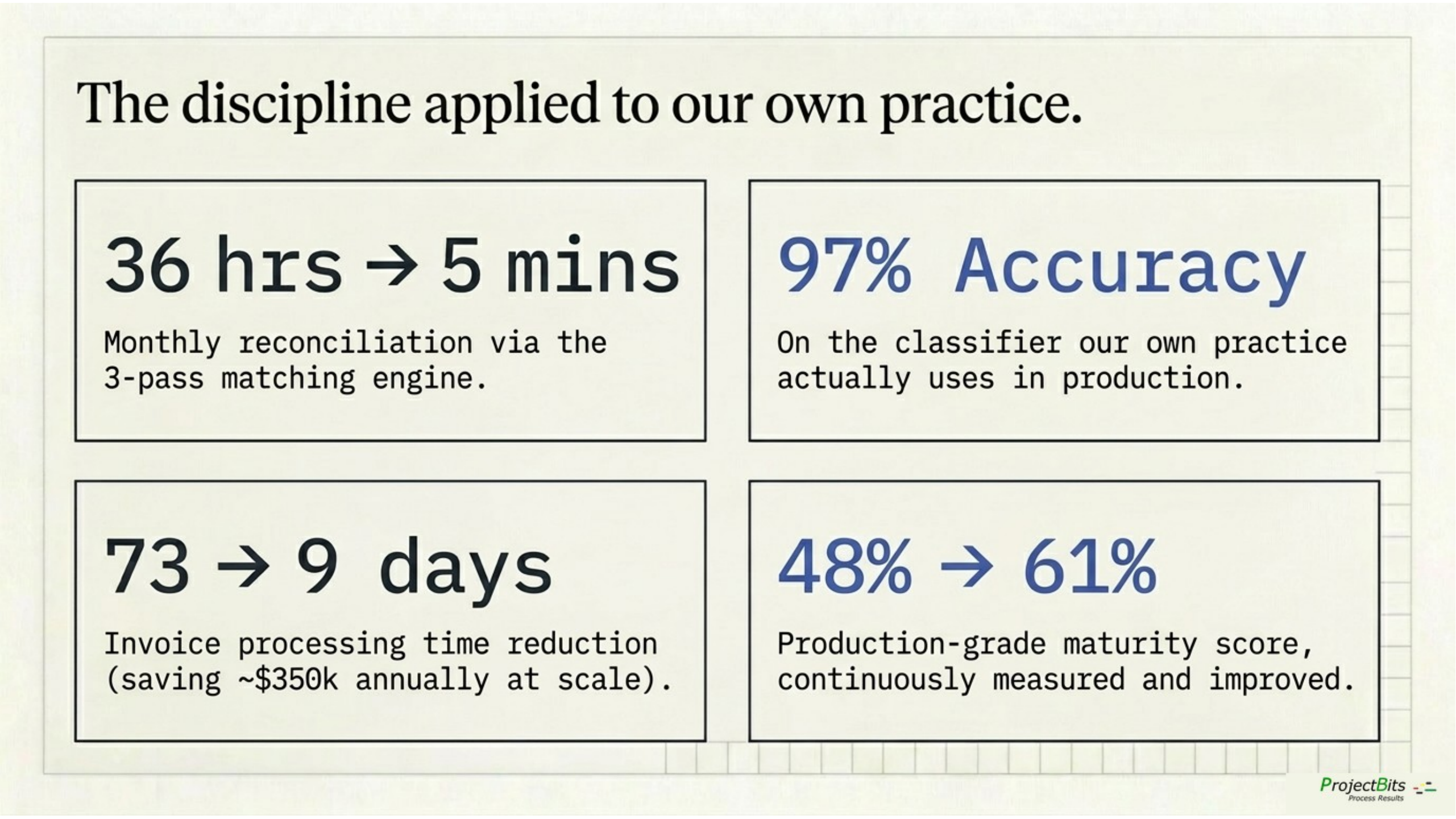

We built it through intentional design — every component evaluated against named alternatives, every choice tied to a business outcome, every trade-off acknowledged out loud. And we refine it through iterative real-world improvement — the classifier got to 97% by being measured and re-tested; reconciliation got from 36 hours to under 5 minutes by being used and adjusted; the 9-category benchmark is scored monthly because monthly is what catches drift.

The design wasn’t theoretical; it was the starting point. Every engagement teaches us something the design didn’t anticipate, and the mill absorbs those lessons and improves. That’s the difference between a system that was built once and one that keeps learning and improving how it works.

We hold ourselves to the same discipline we deliver. The infrastructure that runs the mill is benchmarked against a public 7-layer production-grade agentic-AI framework, the same kind of structured maturity model we apply to your books. As of March 2026 it scores 61% production-grade overall, up from 48% at the prior assessment. The 13-point lift came from two specific deployments (LLM observability via Langfuse, load testing via k6): we measured the gap, shipped the fix, and re-scored. We don’t ask clients to trust a system we wouldn’t grade ourselves on; the score moves because we measure it.

The four-stage thesis

Every small business with QuickBooks captures and records financial data. Almost none of it is leverageable — it sits in QBO as unreviewed or posted records, not signals. The path from records to decisions runs through four stages, each a prerequisite for the next.

| # | Stage | What it means | What fails without it | The buyer test |
|---|---|---|---|---|
| 1 | Sovereign | You control your data and your processing. Your perimeter, your audit trail, portable on demand. Sovereignty is a contractual and architectural property, not a question of where the box lives. | Your AI vendor’s pricing, policies, or availability dictates your finance function. | “If my AI vendor disappears tomorrow, what do I lose?” |
| 2 | Accurate | Reconciled weekly, dimensionally classified (Class / Location / Project), documents linked, 9-category benchmark scored monthly. | AI on dirty data is worse than no AI. Year-end is archaeology. | “If I closed the books today, would the numbers tell the truth?” |
| 3 | Leverageable | Industry-aware classifier suggests categories; reconciliation engine matches accounts in three passes; policy engine evaluates every transaction against your written rules; audit log captures every AI suggestion and every human override. | AI is a parlor trick: interesting demos, no operational work, no conviction at year-end. | “Do my books produce insight, or just records?” |
| 4 | Informing decisions | A six-category operating scorecard (Cash · Growth · Delivery · Profitability · Process · Recommendations), refreshed weekly. 13-week cash forecast, scenario models, customer-profitability ranking, industry-benchmarked KPIs. Fractional CFO arrives to data they can trust. | Advisory engagements stall in cleanup. You find things out from the books instead of running the business with them. | “Are we making decisions from the books, or finding things out from them?” |

Most small businesses are stuck between Sovereign and Accurate. Some attempt Leverageable, but their data is too noisy for AI to add value. Almost none reach Informing decisions, because their books were never built for it.
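Because each stage is a prerequisite for the next, a business sits at the highest stage whose buyer test passes and whose earlier stages all pass too. A minimal Python sketch, assuming each buyer test has been reduced to a yes/no answer; the `Stage` enum and `highest_stage` function are hypothetical illustrations, not ProjectBits tooling:

```python
from enum import IntEnum


class Stage(IntEnum):
    """The four stages, in prerequisite order (NONE means not yet Sovereign)."""
    NONE = 0
    SOVEREIGN = 1
    ACCURATE = 2
    LEVERAGEABLE = 3
    INFORMING_DECISIONS = 4


def highest_stage(passed: dict[Stage, bool]) -> Stage:
    """Return the highest stage reached without skipping a prerequisite.

    A later stage only counts if every earlier stage also passed, so AI
    tooling (Stage 3) on unreconciled books (Stage 2 failing) still leaves
    the business at Stage 1.
    """
    reached = Stage.NONE
    for stage in (Stage.SOVEREIGN, Stage.ACCURATE,
                  Stage.LEVERAGEABLE, Stage.INFORMING_DECISIONS):
        if not passed.get(stage, False):
            break  # a failed prerequisite caps everything above it
        reached = stage
    return reached


# Sovereign data and AI tooling in place, but the books are not reconciled.
answers = {Stage.SOVEREIGN: True, Stage.ACCURATE: False, Stage.LEVERAGEABLE: True}
print(highest_stage(answers).name)  # SOVEREIGN
```

The early `break` is the whole point: a passing Leverageable test does not count while Accurate fails.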

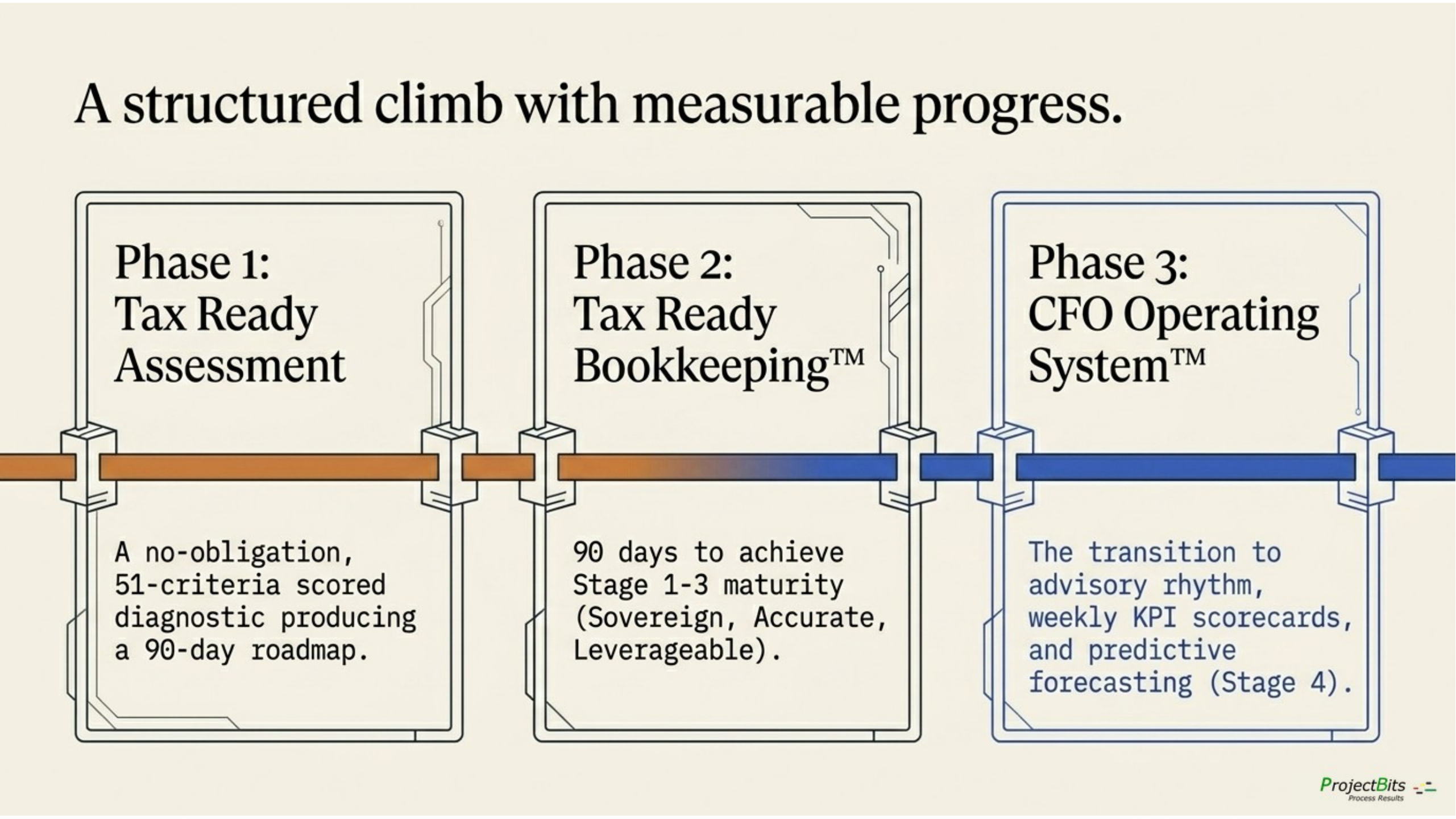

Tax Ready Bookkeeping is the practice that gets the data to Stage 2. The AI Stack is what unlocks Stage 3. The combination is what makes Stage 4 — a traditional or fractional CFO who advises rather than cleans — possible at all.

Why AI alone fails — and what fixes it

Most small-business owners hearing “AI in the books” picture a black box that categorizes transactions. That product exists. It is only a part of what we provide, and it is not why customers should care.

Three failure modes when AI runs the books alone

- Hallucination on numbers or events it doesn’t know or fully understand. Generic AI categorizes “Microsoft 365” as “software” and stops. It doesn’t know that an MSP has tiered SaaS — Datto, ConnectWise, M365, Webroot — that need to land in different accounts to make customer profitability legible.

- No memory across time. A categorization that was right in March drifts in October because the AI doesn’t remember what your bookkeeper decided. By year-end the same vendor lives in three accounts.

- No audit trail. When DCAA, your tax preparer, or your CPA asks “why is this here?” and you can’t reconstruct what the AI did six months ago, you have an audit problem. This is non-negotiable for anyone carrying compliance obligations, which is most of us.

What fixes it — four operations running together, grounded in curated retrieval

| Operation | Built today | What it adds |
|---|---|---|
| Categorize | Embedding + kNN classifier (mxbai-embed-large, k=3), benchmarked at 97% accuracy on the categories your business actually uses. Industry-tuned. | The AI suggests against your 12-month coding history, not its training memory. |
| Reconcile | qbo-reconciliation engine: three-pass matching (exact, date-tolerant, fuzzy) across bank ↔ QBO ↔ payment processor. | “From 36 hours to under 5 minutes” is a measurement, not a marketing claim. |
| Evaluate | OPA / Rego: 10 production policies (FAR / DCAA, expenses, capitalization, month-end, vendor approval). Demonstrated live in the Policy Compiler video. | Your written rules run automatically on every transaction, before anything posts. |
| Audit | Decision log: every AI suggestion, every human override, every policy verdict, queryable by date / account / vendor. | Reconstruct any decision retroactively. DCAA-grade. |

The four operations of Stage 3 — running together produces insight, not records.
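The Categorize row describes an embedding plus k-nearest-neighbors classifier with k=3: embed the new transaction, find the three most similar transactions in your coding history, and let them vote. A minimal Python sketch of that technique, assuming embeddings are already computed; the toy 3-dimensional vectors and account names are hypothetical, where a real deployment would use vectors from an embedding model such as mxbai-embed-large:

```python
import math
from collections import Counter


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn_suggest(query: list[float],
                history: list[tuple[list[float], str]],
                k: int = 3) -> tuple[str, float]:
    """Suggest an account from the k most similar historical transactions.

    Returns the majority account among the k neighbors and the share of
    neighbors that agreed, usable as a simple confidence signal.
    """
    neighbors = sorted(history, key=lambda item: cosine(query, item[0]),
                       reverse=True)[:k]
    votes = Counter(account for _, account in neighbors)
    account, count = votes.most_common(1)[0]
    return account, count / len(neighbors)


# Hypothetical coding history: (embedding, account previously assigned).
history = [
    ([0.9, 0.1, 0.0], "6120 Tooling - Backup"),
    ([0.8, 0.2, 0.1], "6120 Tooling - Backup"),
    ([0.1, 0.9, 0.0], "6130 Tooling - PSA"),
    ([0.0, 0.1, 0.9], "6400 Telecom"),
    ([0.7, 0.3, 0.0], "6120 Tooling - Backup"),
]
print(knn_suggest([0.85, 0.15, 0.05], history))  # ('6120 Tooling - Backup', 1.0)
```

Because the vote comes from your own history rather than model memory, a 2-of-3 split is itself a useful signal for the policy step to route to review.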

Run those four over time, and a fifth thing emerges: insight. Not “AI being clever.” Patterns. The classifier disagrees with current coding 6% of the time, and the disagreements cluster around two vendors. Reconciliation is fresh on 23 of 24 accounts; one account has been stale for 14 days. Per-diem rule fired 11 times this month vs a 2–3 baseline. Telecom spend is 3× the MSP-peer median.

Those findings are what a CFO opens first. They emerge from the operations running, not from a separate “anomaly detection” product.
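Two of those findings fall straight out of data the operations already produce. A minimal Python sketch, assuming the decision log can be exported as (vendor, classifier suggestion, current coding) rows and reconciliation dates as a per-account mapping; the row layout, the 7-day freshness window, and the sample data are hypothetical:

```python
from collections import Counter
from datetime import date


def disagreement_report(rows: list[tuple[str, str, str]]) -> tuple[float, list[tuple[str, int]]]:
    """Overall classifier-vs-coding disagreement rate, plus the vendors where it clusters."""
    disagreements = [vendor for vendor, suggested, coded in rows if suggested != coded]
    rate = len(disagreements) / len(rows) if rows else 0.0
    return rate, Counter(disagreements).most_common(2)


def stale_accounts(last_reconciled: dict[str, date], today: date,
                   max_age_days: int = 7) -> dict[str, int]:
    """Accounts whose last reconciliation is older than the freshness window, with age in days."""
    ages = {acct: (today - d).days for acct, d in last_reconciled.items()}
    return {acct: age for acct, age in ages.items() if age > max_age_days}


# Hypothetical decision-log export: (vendor, classifier suggestion, current coding).
rows = [
    ("Datto", "6120", "6100"), ("Datto", "6120", "6100"),
    ("Verizon", "6400", "6400"), ("Webroot", "6130", "6130"),
    ("Staples", "6500", "6510"), ("Adobe", "6100", "6100"),
]
rate, clusters = disagreement_report(rows)
print(f"{rate:.0%} disagreement, clustered on {clusters}")
print(stale_accounts({"Operating": date(2026, 5, 12), "Payroll": date(2026, 4, 28)},
                     today=date(2026, 5, 12)))
```

Neither function is an anomaly model; both are plain aggregations over logs the four operations write anyway.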

AI for interpretation, code for structured and repeatable execution, humans for the calls the policy says require a human.
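The “code for structured and repeatable execution” half of that split is easiest to see in reconciliation. A minimal Python sketch of three-pass matching (exact, date-tolerant, fuzzy) between bank lines and ledger entries only; the `Txn` record, the 3-day tolerance, the one-cent fuzzy tolerance, and the use of `difflib.SequenceMatcher` for memo similarity are illustrative assumptions, not the qbo-reconciliation engine’s actual logic, and a payment-processor leg would add a second pairing:

```python
from collections.abc import Callable
from dataclasses import dataclass
from datetime import date
from difflib import SequenceMatcher


@dataclass(frozen=True)
class Txn:
    ref: str
    when: date
    amount_cents: int  # signed integer cents avoids float rounding surprises
    memo: str


def reconcile(bank: list[Txn], ledger: list[Txn], date_tolerance_days: int = 3,
              fuzzy_threshold: float = 0.6) -> dict[str, tuple[str, str]]:
    """Match bank lines to ledger entries in three passes, strictest first.

    Returns {bank_ref: (ledger_ref, pass_name)}; any bank line absent from
    the result is unmatched and would go to a human review queue.
    """
    matches: dict[str, tuple[str, str]] = {}
    open_ledger = list(ledger)

    def run_pass(name: str, is_match: Callable[[Txn, Txn], bool]) -> None:
        for b in bank:
            if b.ref in matches:
                continue  # already matched by a stricter pass
            for entry in open_ledger:
                if is_match(b, entry):
                    matches[b.ref] = (entry.ref, name)
                    open_ledger.remove(entry)  # each ledger entry is used at most once
                    break

    # Pass 1: exact amount, exact date.
    run_pass("exact", lambda b, e: b.amount_cents == e.amount_cents and b.when == e.when)
    # Pass 2: exact amount, date within a few days (posting lag, weekends).
    run_pass("date-tolerant", lambda b, e: b.amount_cents == e.amount_cents
             and abs((b.when - e.when).days) <= date_tolerance_days)
    # Pass 3: amount within one cent and similar memo text, any date.
    run_pass("fuzzy", lambda b, e: abs(b.amount_cents - e.amount_cents) <= 1
             and SequenceMatcher(None, b.memo.lower(), e.memo.lower()).ratio() >= fuzzy_threshold)
    return matches


bank = [
    Txn("b1", date(2026, 5, 1), -12_000, "ADOBE *CREATIVE CLD"),
    Txn("b2", date(2026, 5, 4), -420_000, "VERIZON WIRELESS"),
    Txn("b3", date(2026, 5, 6), -8_999, "DATTO INC 05/06"),
]
ledger = [
    Txn("q1", date(2026, 5, 1), -12_000, "Adobe Creative Cloud"),
    Txn("q2", date(2026, 5, 2), -420_000, "Verizon Wireless"),
    Txn("q3", date(2026, 5, 20), -9_000, "Datto Inc"),
]
print(reconcile(bank, ledger))
```

Running the strict pass first keeps loose rules from claiming entries that an exact match would have taken.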

The model proposes; the predefined process evaluates and produces findings and recommendations; the policy decides whether the entry auto-posts (low-risk, matching the rules) or routes to a human review queue (anything outside the rules). By design, your written policy is the authoritative source of truth for what posts how — not the vendor’s defaults, not the model’s confidence, not anyone’s hunch.
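The propose / evaluate / decide split, with every verdict logged, can be sketched in Python. This is a minimal illustration, not the OPA / Rego policies themselves: the approved-vendor list, the $2,500 capitalization threshold, the 0.90 confidence floor, and the log fields are hypothetical stand-ins for whatever your written policy specifies.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Suggestion:
    txn_id: str
    vendor: str
    amount: float
    account: str       # the model's proposed account
    confidence: float  # e.g. neighbor agreement from the classifier


@dataclass
class DecisionLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, s: Suggestion, verdict: str, reasons: list[str]) -> None:
        """Append-only record of what was proposed, what the policy decided, and why."""
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "txn_id": s.txn_id, "vendor": s.vendor, "account": s.account,
            "verdict": verdict, "reasons": reasons,
        })

    def by_vendor(self, vendor: str) -> list[dict]:
        return [e for e in self.entries if e["vendor"] == vendor]


# Hypothetical written-policy parameters.
APPROVED_VENDORS = {"Adobe", "Datto", "Verizon Wireless"}
CAPITALIZATION_THRESHOLD = 2500.00
MIN_CONFIDENCE = 0.90


def evaluate(s: Suggestion, log: DecisionLog) -> str:
    """The model proposes; these rules decide auto-post vs human review."""
    reasons = []
    if s.vendor not in APPROVED_VENDORS:
        reasons.append("vendor not on approved list")
    if s.amount >= CAPITALIZATION_THRESHOLD:
        reasons.append("amount at or above capitalization threshold")
    if s.confidence < MIN_CONFIDENCE:
        reasons.append("classifier confidence below policy floor")
    verdict = "review" if reasons else "auto-post"
    log.record(s, verdict, reasons)  # logged either way, so any decision can be reconstructed
    return verdict


log = DecisionLog()
print(evaluate(Suggestion("t1", "Adobe", 120.00, "6100", 1.0), log))         # auto-post
print(evaluate(Suggestion("t2", "NewCo LLC", 3100.00, "6100", 0.67), log))  # review
print(log.by_vendor("NewCo LLC")[0]["reasons"])
```

The model’s confidence is one input to the rules, never the decision itself.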

Industry-aware by design

Generic AI bookkeeping treats every business the same. Tax Ready Bookkeeping doesn’t. The data flows and integration, chart of accounts, classifier training, policy parameterization, and KPI benchmarks are configured for your vertical at onboarding. The vertical strategy lives in three tiers.

Professional Services on QBO — primary

The umbrella that covers consulting practices, advisory firms, fractional CFO / CTO shops, agencies, boutique professional firms, and MSPs as a tech-services flavor of professional services. The three questions are the same; the operational stack underneath them differs.

The three questions that define a professional services firm’s books:

- Where is time going? Time-tracking or PSA tickets → billable units per engagement per client

- What does each engagement cost? Loaded labor + engagement expenses + non-billable overhead allocation

- Which clients actually make money? Engagement revenue − billable cost − cost of non-billable hours − overhead = client profitability
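The third question is arithmetic once the first two are answered, provided hours are converted to dollars at a loaded rate before they are subtracted. A minimal sketch with hypothetical figures for one client-month; the loaded hourly rate and the overhead allocation are illustrative assumptions, not benchmarks:

```python
def client_profitability(revenue: float, billable_hours: float, non_billable_hours: float,
                         loaded_rate: float, engagement_expenses: float,
                         overhead_allocation: float) -> float:
    """Engagement revenue minus billable cost, non-billable labor cost, and allocated overhead.

    Hours are converted to cost at the loaded labor rate so every term is in dollars.
    """
    billable_cost = billable_hours * loaded_rate + engagement_expenses
    non_billable_cost = non_billable_hours * loaded_rate
    return revenue - billable_cost - non_billable_cost - overhead_allocation


# Hypothetical month: $12,000 fee, 80 billable + 20 non-billable hours at a $75 loaded rate,
# $600 of engagement expenses, $1,500 of overhead allocated to this client.
print(client_profitability(12000, 80, 20, 75, 600, 1500))  # 2400
```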

Consulting / advisory / fractional / agency

| Anomaly the system surfaces | Why it matters |
|---|---|
| “Project Larson Industries: 64% billable, 36% non-billable. Your project-typical baseline is 78 / 22. Margin leakage worth a conversation.” | Fixed-fee project drifting from estimate; PM needs to reset scope or rate. |
| “Retainer Greenfield Co: 40 hours in each of the last 4 months. This month: 12 hours. Client gone quiet, or hours not entered.” | Retention risk surfaced before invoicing. |
| “Subcontractor labor coded to 5400 (Cost of Services) but missing 1099 vendor flag. 12 transactions in May.” | 1099 obligation visible in real time, not next January. |
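The first two anomalies are comparisons against the client’s own baseline. A minimal Python sketch, assuming hours are already pulled from the time-tracking system; the 10-point drift tolerance and the 50% drop ratio are hypothetical parameters an onboarding would set per firm:

```python
from typing import Optional


def billable_mix_drift(billable_hours: float, non_billable_hours: float,
                       baseline_billable_pct: float, tolerance_pts: float = 10.0) -> Optional[str]:
    """Flag a project whose billable share has fallen well below its typical baseline."""
    total = billable_hours + non_billable_hours
    if total == 0:
        return None
    pct = 100 * billable_hours / total
    if baseline_billable_pct - pct > tolerance_pts:
        return f"{pct:.0f}% billable vs {baseline_billable_pct:.0f}% baseline: margin leakage"
    return None


def retainer_drop(monthly_hours: list[float], drop_ratio: float = 0.5) -> Optional[str]:
    """Flag a retainer whose latest month is far below the average of the prior months."""
    *prior, current = monthly_hours
    if not prior:
        return None
    typical = sum(prior) / len(prior)
    if current < drop_ratio * typical:
        return f"{current:.0f} hours vs {typical:.0f}-hour norm: client quiet, or hours not entered"
    return None


print(billable_mix_drift(64, 36, baseline_billable_pct=78))
print(retainer_drop([40, 40, 40, 40, 12]))
```

Both checks return a sentence rather than a score, because the output is meant to start a conversation.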

MSP / IT services — sub-flavor, PSA-driven

| Anomaly the system surfaces | Why it matters |
|---|---|
| “Customer Acme: MRR $2,400, but tickets-to-resolve hit 47 hours this month, 3× your portfolio average. Customer profitability: −$180. They’re costing you money.” | The conversation about firing or repricing this customer starts with a number, not a hunch. |
| “Tooling allocation for Datto bumped 18% across 3 customers; one of them isn’t on the plan you upgraded. Allocation drift.” | Margin leakage caught the month it happens, not at year-end. |
| “Verizon Wireless: $4,200, 3.0× MSP-typical telecom spend.” | Right account, wrong size. Plan changed and nobody updated the budget. |

Trades / Field Service on FSM + QBO — secondary

Service businesses running ServiceTitan, Housecall Pro, Jobber, or similar. Crew-based, owner-operator or small GM team. High job volume, $1M–$10M revenue. Busy and broke at the same time. Job costing lives in a spreadsheet or doesn’t exist; change orders disappear into the books; bonding and lending conversations are painful because the financials don’t hold up.

The system surfaces job-by-job profitability the same way it surfaces engagement profitability for professional services — different operational source (FSM not PSA), same architectural pattern.

Financial Services and Real Estate — tail

Small wealth-management firms, RIAs, fractional CFO practices serving non-VC-backed firms, and real-estate operations bookkeeping — property managers and holding companies in operations mode (not 1031 / cost-seg specialty). Compliance pressure higher than peers; books need to stand up to a regulator, lender, or partner-in-residence at any moment.

The system answers the same three questions, adapted to engagement type: AUM-fee revenue per client, advisor labor per client, and compliance-overhead allocation for advisory firms; property revenue, operating expenses, and asset-level depreciation tracking for real-estate operations.

Cross-vertical — the policy-stage signal

The fit signal that runs through all three tiers, independent of vertical: the owner has written operational policies as the team grew — travel limits, expense thresholds, approval rules, billing standards — and is starting to wonder if those policies are being followed. The Tax Ready Assessment locates the firm on the Financial Maturity Staircase; Tax Ready Bookkeeping is the service that turns those written policies into rules every transaction is evaluated against. Past just-ask-me, into write-it-down, looking for enforce-it-mechanically.

The same architecture serves all three tiers — different account templates, different classifier patterns, different policy parameters. The system “knows the difference.”

Why niche focus is the right strategy

A generalist bookkeeper learning a new vertical has to rebuild context — the chart of accounts, the operational system on the other side of the integration, the policy questions an owner in that industry actually asks, the benchmarks that mean something to them. A specialist starts with that context already built. The conversation with the owner sounds different from the first call: “your tooling spend on Datto across these three customers is drifting, here’s what that usually means” lands differently than “your software expense looks high, you might want to look at it.” Niche focus is what turns a bookkeeping engagement into an advisory relationship — and the outcome an owner remembers is the relationship, not the close.

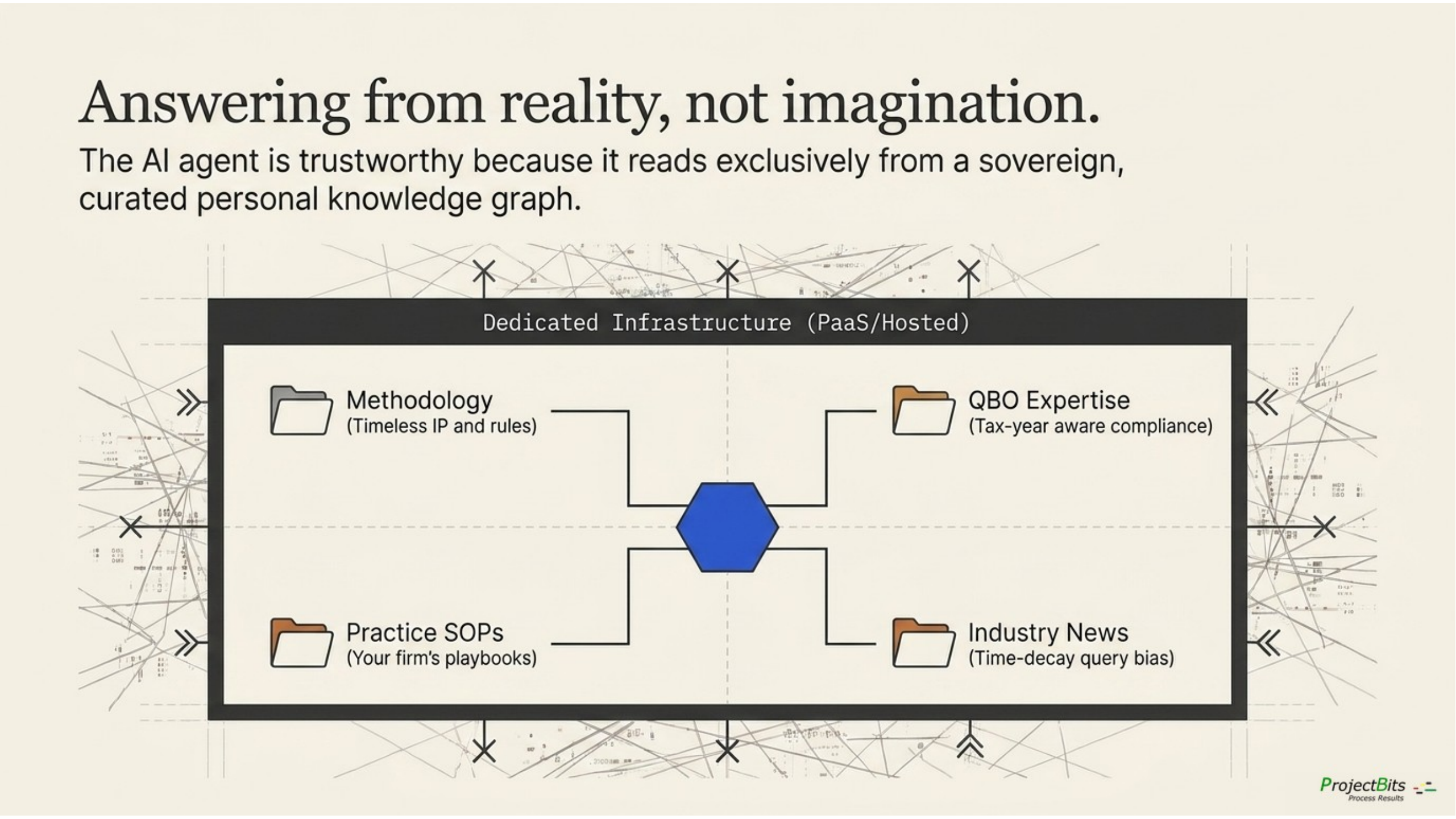

The curated retrieval layer that knows your business

The reason the AI can be trusted with your books is that it doesn’t answer from training memory. It answers from a curated retrieval layer that you and ProjectBits control — your data, your processing, your audit trail, sovereign by design.

Control and sovereignty are the principle. Deployment is the menu.

“Dedicated infrastructure” means dedicated to your engagement — not pooled with anyone else’s, not training anyone’s model — and there are several legitimate ways to deliver it, each with the same control guarantee:

| Deployment mode | What it looks like | When it fits |
|---|---|---|
| Platform-as-a-Service (PaaS) tenancy | ChromaDB, the agent runtime, and the workflow rail running in a dedicated tenancy on AWS / Azure / GCP / similar: contractually isolated, your data, your encryption keys where supported. | The dominant modern mode. Right when you want the operational economics of the cloud without pooled-tenant exposure. |
| Private cloud you own | Same stack running in your own cloud account, billed to you, administered jointly. | Right when your security or compliance team requires the perimeter to be inside your billing relationship. |
| On-premises | Same stack running on your hardware, in your data center. | Right when regulatory or contractual obligations require physical custody. |
| ProjectBits-hosted | Same stack on infrastructure ProjectBits operates, with the same contractual isolation as a SaaS agreement that stipulates your data is never used to train any model. | Right when you want the simplicity of a managed service and the control of a dedicated tenant. |
| Mixed | The stack split across modes: for example, the agent runtime in your PaaS tenancy, the retrieval layer ProjectBits-hosted, the workflow rail on your premises. | Most engagements end up here. The architecture supports it by design. |

The retrieval layer — four curated collections feeding the agent layer.

The phrase that ties them together is “dedicated to your engagement.” ChromaDB is the retrieval engine in every mode; what changes is whose perimeter it runs in. Curation (the editorial work of canonical-source designation, supersession, and time-decay weighting) is the same work regardless. Curation is what makes retrieval honest. Control and sovereignty are what make it yours.
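Supersession and time-decay weighting can both be applied as re-ranking rules after the vector search returns candidates. A minimal Python sketch, assuming each retrieved chunk carries `similarity`, `published`, and `superseded` metadata (hypothetical field names, not a ChromaDB schema) and using exponential decay with a made-up 90-day half-life:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Chunk:
    text: str
    similarity: float         # from the vector search; higher is closer
    published: date
    superseded: bool = False  # set by curation when a canonical source replaces it


def curated_rank(results: list[Chunk], today: date,
                 half_life_days: Optional[float] = 90.0) -> list[Chunk]:
    """Drop superseded chunks, then re-rank by similarity discounted for age.

    half_life_days=None models a timeless collection (no decay); otherwise a
    chunk loses half its weight every half_life_days.
    """
    def score(c: Chunk) -> float:
        if half_life_days is None:
            return c.similarity
        age_days = (today - c.published).days
        return c.similarity * 0.5 ** (age_days / half_life_days)

    live = [c for c in results if not c.superseded]
    return sorted(live, key=score, reverse=True)


results = [
    Chunk("2025 per-diem guidance", 0.92, date(2025, 10, 1)),
    Chunk("2026 per-diem guidance", 0.88, date(2026, 5, 5)),
    Chunk("Draft guidance, replaced", 0.95, date(2024, 1, 10), superseded=True),
]
today = date(2026, 5, 12)
print([c.text for c in curated_rank(results, today)])
print([c.text for c in curated_rank(results, today, half_life_days=None)])
```

With decay on, the newer chunk outranks a slightly closer but older one; with decay off (a timeless collection), raw similarity wins. The superseded chunk never appears in either case.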

To make this concrete — here’s what runs in our lab

Our practice runs the ProjectBits-hosted deployment mode on our own infrastructure, because we are the practitioner-customer of our own architecture and we use the lab as both a working environment and a reference implementation. The retrieval layer is ChromaDB on a dedicated RAG host (one box, one purpose). The agent runtime is OpenClaw on a small Kubernetes cluster. The workflow rail is n8n, also on Kubernetes. Local LLM inference runs on a Tesla P40 for sensitive workloads; frontier models (Claude, Gemini, OpenAI, Perplexity) are reached over named API contracts with no-training clauses. PostgreSQL holds the session history and structured memory. Everything is wrapped in Cloudflare Zero Trust at the perimeter and benchmarked monthly against a public 7-layer production-grade framework. The 61% score cited earlier in this whitepaper is this lab’s score. When you ask “how does this actually look in production?”, the answer is: it looks like our lab, sized for your business, deployed in the mode that fits your control requirements.

What’s actually in the retrieval layer

Existing — the personal knowledge graph (152,609 chunks across 8 collections). Books read, research sessions, methodology development conversations, AI agent runs, curated bookmarks. This is the substrate — it answers methodology and practice questions cleanly. Personal knowledge, biographical in shape.

Under active build — four topical collections that ground operational AI:

| Collection | What it holds | Time-aware? | Why it matters |
|---|---|---|---|
| projectbits_methodology | The 9-category benchmark, Financial Maturity Staircase, policy-to-workflow rules, audit-trail design, brand voice. ProjectBits IP. | No (timeless) | The AI answers methodology questions in your methodology, not generic bookkeeping. |
| qbo_expertise | IRS publications (535, 583, 463), ProAdvisor practice notes, QBO API reference, vertical-specific bookkeeping research. Authority-tagged. | Tax-year-tagged for items that decay | Conflicts between official sources and community noise resolve toward authority. Tax-year-aware questions get tax-year-aware answers. |
| projectbits_practice | SOPs, runbooks, sales process, engagement letters, onboarding checklists, pricing tiers, anonymized lessons learned. | No | The bookkeeper’s question gets a ProjectBits answer, not a generic one. |
| industry_news | IRS news releases, Intuit announcements, MSP / vertical trade press, EOS publications, regulatory changes. | Yes (time-decay query bias) | This week’s IRS Rev. Proc. surfaces in this week’s bookkeeping decisions. Old guidance gets demoted. |

The four collections close the loop on Stage 3. They’re the difference between “AI categorized your expenses” and “the IRS issued a per-diem update last week and your books haven’t caught up — here are the two transactions that need re-evaluation.”
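The qbo_expertise promises (conflicts resolve toward authority; tax-year-aware questions get tax-year-aware answers) reduce to a filter and a sort on metadata. A minimal Python sketch; the authority tiers, their ordering, and the `tax_year` tag are hypothetical, not an official taxonomy:

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical authority tiers: a lower rank wins a conflict.
AUTHORITY_RANK = {"irs": 0, "intuit_official": 1, "proadvisor_note": 2, "community": 3}


@dataclass
class Source:
    claim: str
    authority: str           # key into AUTHORITY_RANK
    tax_year: Optional[int]  # None for guidance that is not tied to a tax year


def resolve(sources: list[Source], tax_year: int) -> Optional[Source]:
    """Keep sources that apply to the asked-about tax year, then prefer the most authoritative."""
    eligible = [s for s in sources if s.tax_year is None or s.tax_year == tax_year]
    if not eligible:
        return None
    return min(eligible, key=lambda s: AUTHORITY_RANK[s.authority])


sources = [
    Source("Community forum answer", "community", None),
    Source("IRS publication, 2024 revision", "irs", 2024),
    Source("IRS publication, 2025 revision", "irs", 2025),
    Source("ProAdvisor practice note", "proadvisor_note", 2025),
]
print(resolve(sources, 2025).claim)  # IRS publication, 2025 revision
print(resolve(sources, 2023).claim)  # Community forum answer
```

Filtering by tax year before ranking by authority matters: an authoritative answer for the wrong year should lose to nothing at all, not win by rank.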

Where humans interact with the system

Humans interact with the system across several surfaces — not one. We chose each surface for what it does well, evaluated against the specific interaction it serves. The discipline matters: we’re not collecting tools, and we’re not pretending one platform answers every need. We incorporate a tool where its strengths line up with a business outcome, and we accept its trade-offs explicitly.

| Interaction | Right surface | Why we chose it |
|---|---|---|
| Daily ledger work, customer-facing reports, system-of-record | QuickBooks Online | What your CPA expects, what your auditor reads, what AP / AR runs against. We don’t replace it; we make it more reliable. |
| Where the work actually happens: tickets, time, jobs, projects, properties, units | Your industry-specific operational system: PSA for MSPs (ConnectWise / HaloPSA / SuperOps / Autotask); time tracking for consulting (Toggl / Harvest); FSM for trades (ServiceTitan / Housecall Pro); property management for real estate (Buildium / AppFolio), with bidirectional integration into the data flow | This is where billable activity originates, long before it shows up in QBO. Without this surface, the system only knows what was invoiced; with it, the system knows what was worked, and customer profitability, utilization, and job-cost variance become real numbers the bookkeeper and CFO can defend. |
| Recurring workflows, scheduled jobs, multi-step orchestration | n8n | Deterministic, retriable, auditable. Every step logs to a queue you can rerun. The deterministic rail, and the bridge between QBO, the operational systems, the AI stack, and the dashboards. |
| Conversational review, exception handling, advisory prep, judgment-heavy interactions | OpenClaw (self-hosted agent runtime) | Per-client agent isolation, packaged specialist behaviors, paired-device input for receipt capture inside your perimeter, overnight pattern consolidation that produces a narrative briefing of what’s worth a human’s time. |
| KPI snapshots, scorecard trends, dashboard views | Web dashboard / Grafana | Quick visual answers for “where are we?” without opening a conversation. |
| Approvals on the go | Email / Teams / Slack | Approvals happen where the bookkeeper and owner already are. The system meets them there; it doesn’t make them come to a new app. |

Why OpenClaw specifically — and what we accepted in return

OpenClaw earns its place in the stack because four of its capabilities directly serve outcomes nothing else delivers as cleanly. We named the trade-offs going in.

The capabilities that fit the work

- A dedicated agent per client. Each engagement runs its own agent with isolated memory, persona, and workspace. Sovereignty is enforced by the runtime, not by promises. Your agent doesn’t learn from another client’s books.

- Packaged specialist behaviors. Categorize this expense, build the weekly scorecard, flag anomalies for close, generate the policy violation report. Consistent results across sessions.

- Paired-device input. Phone-camera receipt capture, screen capture for QBO observation, image processing — handled inside your perimeter. The transaction journey can start with a photo without sending it to a third-party cloud OCR pipeline.

- Overnight pattern consolidation. Background memory processing in three phases modeled on human sleep cycles. While your bookkeeper sleeps, the agent stages recent signals, promotes durable patterns to long-term memory, and extracts themes — producing a narrative diary of what’s worth a conversation.
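The three-phase idea (stage recent signals, promote durable patterns, extract themes) can be illustrated with a small Python sketch. It is a conceptual analogue only, not OpenClaw’s implementation: the signal strings, the three-night promotion rule, and grouping themes by a `topic:` prefix are hypothetical choices.

```python
from collections import Counter


def consolidate(nightly_signals: list[list[str]], long_term: set[str],
                min_nights: int = 3) -> tuple[set[str], dict[str, int]]:
    """One consolidation run over the last several nights of signals.

    Phase 1 (stage): count on how many nights each signal appeared.
    Phase 2 (promote): signals seen on at least min_nights nights join long-term memory.
    Phase 3 (themes): group long-term patterns by their topic prefix ("vendor:", "policy:", ...).
    """
    nights_seen = Counter(sig for night in nightly_signals for sig in set(night))
    promoted = {sig for sig, n in nights_seen.items() if n >= min_nights}
    memory = long_term | promoted
    themes = Counter(sig.split(":", 1)[0] for sig in memory)
    return memory, dict(themes)


nights = [
    ["vendor:Datto coding disagreement", "policy:per-diem fired"],
    ["vendor:Datto coding disagreement", "policy:per-diem fired", "recon:Payroll stale"],
    ["vendor:Datto coding disagreement", "policy:per-diem fired"],
    ["recon:Payroll stale"],
]
memory, themes = consolidate(nights, set())
print(sorted(memory))
print(themes)
```

A signal that shows up once is noise; one that persists across nights earns a place in the morning briefing.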

Trade-offs we accepted

- Activation curve. Overnight consolidation is opt-in and disabled by default; activating it is service-rollout work. Worth it for the fractional CFO use case; we’re not pretending it’s free.

- Integration footprint. n8n has a wider pre-built integration library. OpenClaw shines on conversational and judgment work but isn’t a substitute for n8n’s deterministic orchestration. We use both, deliberately.

- Operational overhead. It’s another platform to monitor, back up, and version. We accepted that because the per-client agent model and overnight diary capability aren’t available elsewhere — and the outcome they unlock (advisory-ready data on Monday morning) justifies the cost.

The discipline: every component — OpenClaw, n8n, ChromaDB, OPA, the classifier, the reconciliation engine — earns its place because of a measurable business outcome. Tools that don’t deliver outcomes get cut. We evaluate; we don’t collect.

Five questions we hear owners actually ask

The v1.1 whitepaper opened this section with “Five Finance Fears” — defensive framing about what could go wrong. Owners ask different questions. The questions worth answering are about elevation, not protection. Here are the five.

| Question | Honest answer |
|---|---|
| Will this raise my game: give me a clearer view of my financial position and help me make better decisions? | Yes. That’s the entire point. Books that close on time. Reconciliations that match. Categorizations that hold up across the year. KPIs that mean something for your industry. You go from finding things out from your books to running the business with them. |
| Should I learn some of this myself, or is it all my bookkeeper’s job? | Learn some. Not necessarily the technical layer, unless it interests you, but the AI’s behavior, your policies, and what your benchmarks mean for your industry. ProjectBits is your sherpa for the climb; you still need to prepare for the ascent. The owners who get the most out of the system are the ones who understand what it can answer and shape it toward the questions their business actually faces. |
| What if the AI is wrong? | Posting takes place per the policy you write. Routine, low-risk transactions matching your rules can auto-post; anything outside those rules routes to a human review queue, by design. If a suggestion is wrong, it’s wrong in the queue you review, not in your books. Your policy decides where the line is, not the vendor. |
| How do I know I’m getting real value, not just hype? | Two scorecards, both visible. Yours: the 9-category benchmark scored monthly, so you watch the number move. Ours: the infrastructure your engagement runs on is itself scored against a 7-layer production-grade framework (currently 61%, up from 48%). We hold ourselves to the same kind of public maturity scorecard we hold your books to. If the signals don’t show up, the engagement isn’t working, and we’ll tell you that rather than bill you for another quarter. |
| What does success look like 90 days in? | Three concrete signals. The benchmark score has moved. The system has surfaced findings that prompted real conversations about a customer’s profitability, a vendor pattern, or a policy gap. By month four, the conversation with your fractional CFO is about strategy, not data cleanup. That’s the bridge from Stage 3 to Stage 4. |

The technical analogies and the deeper sovereignty narrative live in the appendix, written for CTOs and CFOs.

Receipts — the discipline applied to the practice

The disciplines this paper describes are not theoretical for ProjectBits. They are the practice we run on the practice.

The marketing strategy, methodology library, session history, client correspondence, and reference material that drive day-to-day decisions live in a curated retrieval system we built and maintain: the same architecture pattern this paper recommends, sized for a small consulting practice. Here is what that looks like today (mid-May 2026), published as a living, searchable MkDocs documentation site alongside the underlying retrieval store:

A canonical-source-designated methodology corpus

Canonical source is the records-management term for the one document on a topic that is the authoritative master version — every other document on the same topic is explicitly marked as superseded. Six governing documents fit that test here — positioning, methodology, onboarding playbook, voice, brand kit, and the umbrella methodology page — each with explicit status (canonical / living / locked), supersession metadata, and review cadence. Older PDF versions of the cornerstone marketing document are explicitly marked as superseded and excluded from retrieval. Multiple sources of truth produce contradictory answers; we eliminated ours.

A multi-format intake pipeline feeding ten retrieval collections

Markdown, PDFs, EPUBs (technical books), meeting transcripts, web articles, AI-conversation exports, and bookmarked external material flow into a vector store carrying roughly 195,000 indexed chunks. Working sessions, conversation history, ingested books, industry news with time-decay weighting, and curated external reference material each get their own collection.

A session-history archive that compounds

Every working session is captured to a PostgreSQL log with topic, summary, and keyword indexes; 304 sessions are logged to date, and transcripts are ingested with provenance metadata. New work begins by querying prior work. The archive stopped being a backup system and became institutional memory at the point where retrieval became faster than asking a colleague, the habit it replaced.
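A session log with topic, summary, and keyword indexes needs only a small schema. The sketch below uses Python’s built-in `sqlite3` as a stand-in for PostgreSQL so it runs anywhere; the table and column names are hypothetical, and a PostgreSQL version might instead use full-text search (`tsvector`) or a keyword array rather than the join table shown here.

```python
import sqlite3

SCHEMA = """
CREATE TABLE session (
    id      INTEGER PRIMARY KEY,
    started TEXT NOT NULL,  -- ISO-8601 timestamp, sorts chronologically as text
    topic   TEXT NOT NULL,
    summary TEXT NOT NULL
);
CREATE TABLE session_keyword (
    session_id INTEGER NOT NULL REFERENCES session(id),
    keyword    TEXT NOT NULL
);
CREATE INDEX idx_session_topic ON session(topic);
CREATE INDEX idx_keyword ON session_keyword(keyword);
"""

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)


def log_session(started: str, topic: str, summary: str, keywords: list[str]) -> int:
    """Insert one session and its keywords; returns the new session id."""
    cur = conn.execute("INSERT INTO session (started, topic, summary) VALUES (?, ?, ?)",
                       (started, topic, summary))
    sid = cur.lastrowid
    conn.executemany("INSERT INTO session_keyword (session_id, keyword) VALUES (?, ?)",
                     [(sid, k.lower()) for k in keywords])
    return sid


def prior_work(keyword: str) -> list[tuple[str, str, str]]:
    """Find earlier sessions tagged with a keyword, newest first."""
    return conn.execute(
        """SELECT s.started, s.topic, s.summary
           FROM session s JOIN session_keyword k ON k.session_id = s.id
           WHERE k.keyword = ? ORDER BY s.started DESC""",
        (keyword.lower(),)).fetchall()


log_session("2026-05-01T09:00", "Classifier benchmark", "Re-scored kNN on May data", ["classifier", "benchmark"])
log_session("2026-05-08T14:30", "Per-diem policy", "Drafted per-diem rule update", ["policy", "per-diem"])
log_session("2026-05-11T10:15", "Classifier drift", "Investigated Datto disagreements", ["classifier", "datto"])
for row in prior_work("Classifier"):
    print(row)
```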

A live entity-resolution layer

Contacts in the CRM, leads from outreach platforms, meeting participants, and email senders are reconciled to single canonical identities — the SMB-appropriate version of master data management, not an enterprise MDM rollout. A cross-system query for “everything we have on this person or company” returns one answer, not three.
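At its simplest, reconciling records to one canonical identity means grouping records that share a strong identifier. A minimal Python sketch using union-find over normalized email addresses and phone numbers; the record shape, the match keys, and the last-10-digits phone rule are hypothetical, and a production layer would add fuzzier name matching and human review of uncertain merges:

```python
import re


def normalize_email(email: str) -> str:
    return email.strip().lower()


def normalize_phone(phone: str) -> str:
    digits = re.sub(r"\D", "", phone)
    return digits[-10:]  # assumption: compare on the last 10 digits (North American numbers)


def resolve_entities(records: list[dict]) -> list[set[str]]:
    """Group record ids that share a normalized email or phone into canonical identities."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen: dict[str, str] = {}  # match key -> first record id that carried it
    for r in records:
        keys = []
        if r.get("email"):
            keys.append("email:" + normalize_email(r["email"]))
        if r.get("phone"):
            keys.append("phone:" + normalize_phone(r["phone"]))
        for key in keys:
            if key in first_seen:
                union(r["id"], first_seen[key])
            else:
                first_seen[key] = r["id"]

    groups: dict[str, set[str]] = {}
    for r in records:
        groups.setdefault(find(r["id"]), set()).add(r["id"])
    return list(groups.values())


records = [
    {"id": "crm-17", "source": "CRM", "email": "Dana@Example.com", "phone": "(555) 010-2233"},
    {"id": "lead-4", "source": "outreach", "email": "dana@example.com "},
    {"id": "mtg-9", "source": "meeting", "phone": "+1 555-010-2233"},
    {"id": "mail-2", "source": "email", "email": "ops@othercorp.example"},
]
print(resolve_entities(records))
```

Union-find matters because matches are transitive: the meeting record shares only a phone with the CRM contact, and the outreach lead shares only an email, yet all three collapse to one identity.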

A canonical service registry for the practice’s own infrastructure

What runs where, with what credentials, on what health endpoint, is stored in one queryable place — applied to the firm’s own operations, not just client engagements. The discipline doesn’t stop at the books.

What’s still incomplete — and we say so

The question-capture loop we designed for surfacing, scoring, and feeding back the questions that drive corpus value exists so far only as an architecture specification. Meeting-transcript intake is live; the canonicalization, scoring, and gap-tracking layers are design specs, not running code. We are working through them the same way we would work a client through them: sequenced, measured, no leaping ahead. The retrieval store carries decades of accumulated correspondence and reference material, but the formal audit (applying canonical-source designation and the metadata schema retroactively to legacy content) is an ongoing multi-month effort. The audit doesn’t get done; it gets maintained.

The architecture in this paper isn’t a sales pitch built from a whiteboard. It is the working specification of the system we are using to run our practice.

Four measured outcomes from our own practice — the discipline applied to ourselves first.

Anything we recommend, we have been running in our own practice long enough to know which parts hurt and where the corners get cut under deadline pressure. When we walk a client through the assessment, the diagnostic, or the maturity ladder, we are walking them through territory we have already crossed.

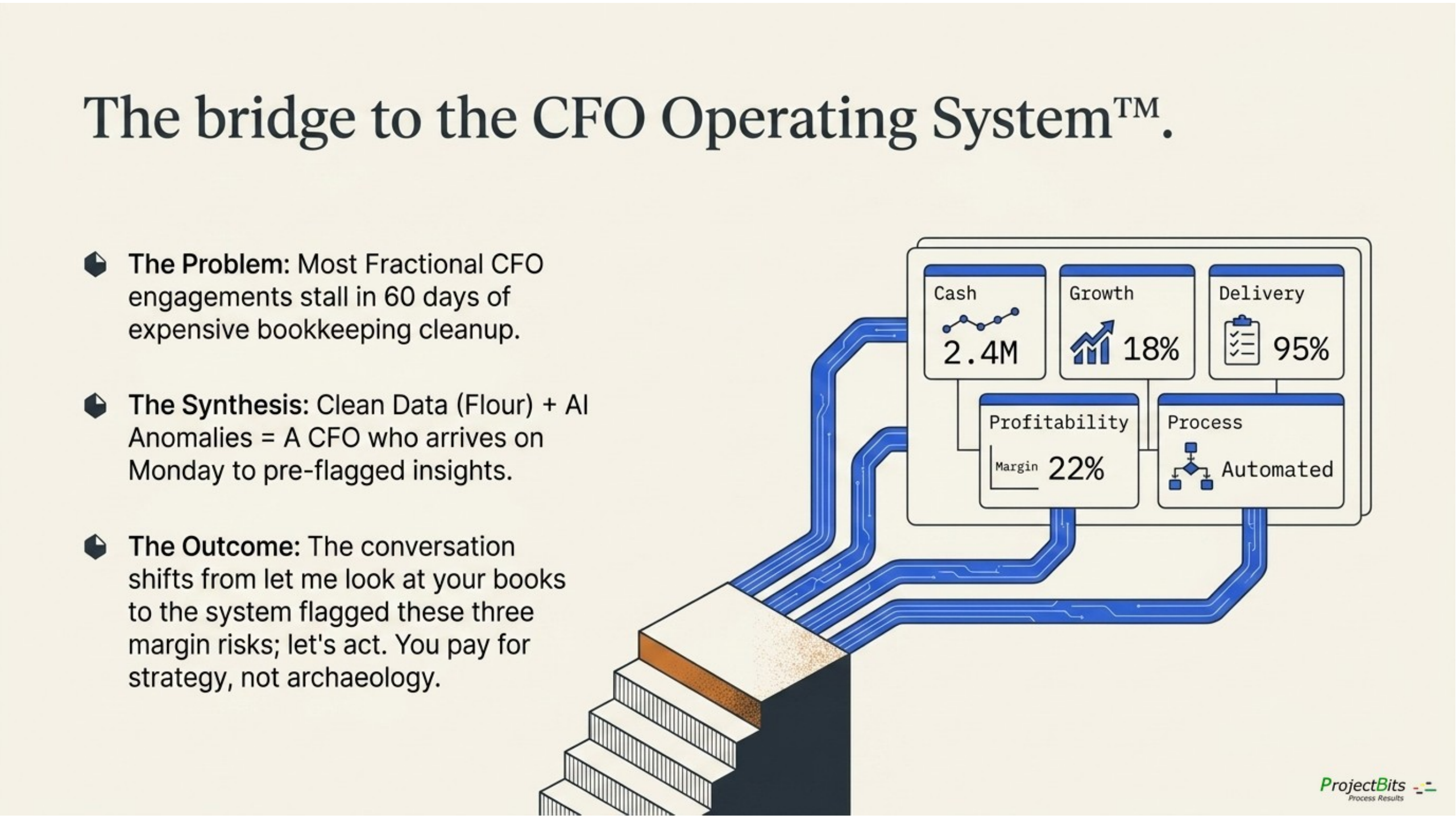

What this unlocks — the bridge to the CFO Operating System

Most fractional CFO engagements stall in the first 60 days. The CFO arrives, looks at the books, and finds out they’re three months behind. Reconciliations are stale. Categorizations are inconsistent. Policies live in a binder. The first 30 days become cleanup. The client’s reaction: “I hoped for more value.”

The Tax Ready + AI Stack combination changes the first month entirely. The CFO arrives to a system that is already starting to produce its own findings and opportunities:

- Industry-benchmarked KPIs — not “your expenses are up” but “your tooling spend is 18% above MSP-peer median, driven by these three vendors.”

- Classifier-flagged disagreements — 14 transactions where the categorization pattern says one thing and the current coding says another. Each one a specific, reviewable item.

- Reconciliation freshness map — which feeds are reliable, which need attention, which have been stale long enough to hide problems.

- Policy fire trends — per-diem rule fired 11 times in May vs 2–3 baseline. Travel pattern shifted; worth a structural conversation, not a one-off cleanup.

- Customer profitability ranking — which clients actually make money, with the numbers to defend the call.
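The policy-fire trend in the list above is a question of common-cause versus special-cause variation, in Deming’s terms. One standard way to draw that line for event counts, assumed here rather than taken from this paper, is a Shewhart c-chart: with a baseline mean count c̄, the control limits are c̄ ± 3√c̄. The baseline below is a hypothetical six months of 2–3 fires per month.

```python
import math

def c_chart_limits(baseline_counts):
    """Shewhart c-chart limits for event counts per period (Poisson assumption)."""
    c_bar = sum(baseline_counts) / len(baseline_counts)
    spread = 3 * math.sqrt(c_bar)
    return max(c_bar - spread, 0.0), c_bar, c_bar + spread

def classify(count, baseline_counts):
    """Label a period's count as common-cause or special-cause variation."""
    lcl, _, ucl = c_chart_limits(baseline_counts)
    if count > ucl or count < lcl:
        return "special-cause: investigate the process"
    return "common-cause: normal variation"

baseline = [2, 3, 2, 3, 2, 3]  # hypothetical monthly fires of the per-diem rule
lcl, center, ucl = c_chart_limits(baseline)
print(f"center={center:.2f} ucl={ucl:.2f}")
print(classify(11, baseline))  # special-cause: investigate the process
print(classify(4, baseline))   # common-cause: normal variation
```

With a 2.5 mean the upper limit sits near 7.2, so a month with 11 fires is a structural signal, while a month with 4 is noise that does not warrant a conversation.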

The CFO’s first conversation with the owner isn’t “let me look at your books.” It’s “the system flagged these three things — let’s start with which one matters most to your business this quarter.”

The economics, anchored in market data

Pilot reports the open-market range for fractional CFO services as $3,000 to $12,000 per month, with $5,000 to $8,000 as the typical average for early- to mid-stage companies; Anders’ average virtual CFO engagement runs $78,000 per year. Higher costs are explicitly attributed to messy books, frequent support, and manual systems, that is, to time the CFO has to spend on cleanup instead of advisory. Dark Horse anchors the entry point with a paid CFO Assessment, starting at $5,000, that produces a CFO Roadmap. Clean Stage 3 data combined with a productized advisory layer makes a $7K–$10K per month engagement defensible, because the CFO is doing $7K–$10K of advisory work rather than $3K of advisory and $5K of cleanup. The buyer is paying for the work the system can’t do, not for the work the system already did.

The named offer — ProjectBits CFO Operating System

The next book’s spine is a four-package productized service, provisionally named ProjectBits CFO Operating System, built for consulting and professional services firms ($2M+ revenue is the market-typical entry threshold, per Anders and FocusCFO). The four packages mirror the book’s chapter structure:

| Package | What it is | Cadence | Benchmark anchor |
|---|---|---|---|
| 1. Operating System Diagnostic | Paid entry-point engagement: Operating System Readiness Score, financial data quality score, KPI dictionary, CFO dashboard blueprint, core process gap list, 90-day implementation roadmap. | One-time project. | Dark Horse CFO Assessment ($5K+); Cherry Bekaert modernization framing. |
| 2. CFO Control Tower | Recurring fixed-fee subscription: monthly close + management reporting, weekly KPI scorecard refresh, cash forecast, project / client profitability, utilization / realization, pipeline-to-capacity, monthly CFO operating review. | Weekly + monthly. | Pilot $5K–$8K typical; Lucrum $1,599 SMB anchor; The CFO Project’s productized model. |
| 3. Finance Process Buildout | Project-based implementation accelerators: QuickBooks cleanup, CoA redesign for professional services, PSA-to-QBO workflow, time-and-billing redesign, WIP / AR automation, dashboard buildout. Scoped separately from advisory so the CFO subscription doesn’t become unlimited systems work. | Project sprint. | Cherry Bekaert CRM → PSA → ERP → BI flow framing. |
| 4. Quarterly Growth & Traction Review | Add-on for firms running EOS or self-implementing a BOS: convert Rocks to financial measurables, capacity / cash / budget impact analysis, financial accountability for priorities. | Quarterly. | EOS Worldwide’s framework; ProjectBits is EOS-compatible without claiming to be a Professional EOS Implementer. |

The bridge to the CFO Operating System — the scorecard arrives ready.

This whitepaper describes the mill. The next book is the baker. The bread is the decisions that grow the business.

Appendix — The 8-Tier Stack as proof

The 8 Tiers are real. They earn their place by delivering the four guarantees behind the four-stage thesis: sovereignty, auditability, model independence, and operational reliability. Each tier was chosen against named alternatives, with the trade-offs evaluated.

| Tier | What it is | Stage / guarantee | Why this and not the alternative |
|---|---|---|---|
| 1 | LLM: Claude (Sonnet), Gemini, Perplexity, and OpenAI in the cloud, plus Ollama running locally on a Tesla P40 | Stage 3 / Model independence | Alternative considered: single-vendor cloud. Single-vendor lock-in exposes us to pricing, policy, and availability shocks. Bidirectional fallback (cloud ↔ local) removes that single point of failure and lets us match each workload to a suitable model. |
| 2 | Context: a finite per-call window, smart retrieval, and redaction at the boundary | Stage 3 / Operational reliability | Alternative considered: stuff everything into the prompt. That works in demos; in production it breaks down on cost and signal-to-noise. A bounded context with smart retrieval is what makes the RAG layer worth running. |
| 3 | RAG: ChromaDB on a dedicated RAG host with curated topical collections, plus a personal knowledge graph | Stage 3 / Sovereignty + accuracy | Alternatives considered: pgvector inside the main DB; no RAG. No RAG fails because a model’s training memory is generic and stale. Co-locating pgvector with operational data couples two concerns that should scale separately. A dedicated RAG host running ChromaDB lets us scale the knowledge layer independently and keep its blast radius contained. |
| 4 | MCP / Tools: Model Context Protocol, Cloudflare Zero Trust tunnel | Stage 3 / Auditability | Alternatives considered: ad-hoc REST, hardcoded tool descriptors, OpenAI function-calling format. MCP is an open protocol, so the same tool server works across LLM vendors. Per-call permission scopes plus tunnel-only access give us the audit trail compliance buyers require. |
| 5 | Memory: structured per-client memory files + PostgreSQL session history | Stage 3 / Operational reliability + sovereignty | Alternatives considered: ephemeral chat; vendor built-in memory; memory shared across clients. Vendor memory is a lock-in trap and a sovereignty problem. Shared memory leaks across clients. Per-client memory in PostgreSQL is queryable, portable, and isolated by design. |
| 6 | Skills: packaged instruction sets loaded on a trigger phrase | Stage 3 / Operational reliability | Alternatives considered: re-prompt for every task; fine-tune custom models; task-specific scripts. Re-prompting is inconsistent. Fine-tuning is expensive. Skills are declarative, versionable, testable, and load only when the trigger fires. |
| 7 | Agents: OpenClaw (self-hosted runtime) for conversational / judgment work; n8n AI Agent nodes for workflow-embedded judgment | Stages 3 and 4 / Sovereignty | Alternatives considered: cloud agent platforms (OpenAI Assistants, vendor agent UIs). Cloud platforms keep your conversation history, and the isolation between your clients, in a multi-tenant database we don’t control. OpenClaw gives per-client agent isolation, paired-device input, and overnight pattern consolidation. |
| 8 | Workflows: n8n as the deterministic rail; pipelines for ingestion, scorecard, close, knowledge refresh, cash forecast, and compliance monitoring | All stages / Auditability | Alternatives considered: Zapier, Make, Power Automate, custom Python, Airflow. Hosted alternatives put your business logic and credentials in someone else’s perimeter. Self-hosted n8n gives us a queue we can rerun, integrations we don’t have to write, and a perimeter we control. |
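Tier 1’s bidirectional fallback can be sketched as an ordered list of providers per workload, tried in turn until one succeeds. The provider names, the `ROUTES` table, and the stub functions below are hypothetical placeholders for real client wrappers; routing sensitive work local-first and general work cloud-first is one plausible policy, not a documented one.

```python
from typing import Callable, Dict, List, Tuple

class ProviderError(Exception):
    """Raised by a provider wrapper on timeout, quota, or outage."""

Provider = Callable[[str], str]

# Hypothetical routing: sensitive work stays local-first, general work goes cloud-first.
ROUTES: Dict[str, List[str]] = {
    "sensitive": ["local", "cloud"],
    "general": ["cloud", "local"],
}

def complete_with_fallback(prompt: str, order: List[str],
                           providers: Dict[str, Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors: Dict[str, str] = {}
    for name in order:
        try:
            return name, providers[name](prompt)
        except ProviderError as exc:
            errors[name] = str(exc)  # record the failure, then try the next provider
    raise ProviderError(f"all providers failed: {errors}")

# Stub providers standing in for real cloud and local client wrappers.
def cloud_unavailable(prompt: str) -> str:
    raise ProviderError("cloud API returned 503")

def local_model(prompt: str) -> str:
    return f"[local] {prompt}"

used, text = complete_with_fallback("Summarize the May close.", ROUTES["general"],
                                    {"cloud": cloud_unavailable, "local": local_model})
print(used, text)  # local [local] Summarize the May close.
```

Because the fallback is just an ordered list, the same mechanism runs in either direction: a local outage falls back to the cloud for general work, and a cloud outage falls back to local for everything.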

Two corrections from v1.1

- Tier 3 said “pgvector”; reality is ChromaDB on a dedicated host. Fixed.

- Tier 7 said “Claude Code for development. n8n AI Agent nodes for operations.” OpenClaw is now the named runtime for conversational / judgment work, alongside n8n’s agent nodes for workflow-embedded judgment. Fixed.

An aside for the technical reader — the Apple-versus-Cray pattern

Cray built supercomputers that could do extraordinary things if you wrote the right code for them; Apple built systems whose structure made ordinary work reliable at scale. ProjectBits’ AI stack runs both modes by design: Sonnet at design time as the Cray equivalent (translating a written policy into executable Rego, classifying transactions against a 51-criterion taxonomy, drafting case summaries from a transcript), and OPA at runtime as the Apple equivalent (enforcing the resulting policy deterministically, every time, with an audit log). The methodology earns its keep by knowing which work belongs in which mode.
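The runtime half of that split can be illustrated with a per-diem rule. In the stack described here the rule would be Rego evaluated by OPA; the Python below is only a stand-in showing the properties that matter at runtime: a pure, deterministic decision function, a versioned policy identifier, and an append-only audit record carrying a hash of the input. The limits, the policy version string, and the function names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

POLICY_VERSION = "per-diem-2025.1"                    # hypothetical policy identifier
PER_DIEM_LIMITS = {"default": 75.00, "NYC": 110.00}   # hypothetical daily meal limits, USD

AUDIT_LOG = []  # append-only in this sketch; a real deployment would persist it

def evaluate(expense):
    """Pure decision function: the same input always yields the same decision."""
    if expense["category"] != "meals":
        return {"allow": True, "reasons": []}
    limit = PER_DIEM_LIMITS.get(expense.get("city"), PER_DIEM_LIMITS["default"])
    if expense["amount"] > limit:
        return {"allow": False,
                "reasons": [f"meals {expense['amount']:.2f} exceeds per-diem {limit:.2f}"]}
    return {"allow": True, "reasons": []}

def decide(expense):
    """Evaluate the policy and record who-what-which-version in the audit log."""
    decision = evaluate(expense)
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "policy": POLICY_VERSION,
        "input_sha256": hashlib.sha256(
            json.dumps(expense, sort_keys=True).encode()).hexdigest(),
        "decision": decision,
    })
    return decision

denied = decide({"category": "meals", "amount": 92.50, "city": "Denver"})
allowed = decide({"category": "meals", "amount": 92.50, "city": "NYC"})
print(denied["allow"], allowed["allow"], len(AUDIT_LOG))  # False True 2
```

No model is called at decision time; the language model’s contribution ends when the policy is written and reviewed.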

On revisiting these choices

Every entry in this table was an intentional decision at a point in time. We expect to revisit each one as the practice evolves. A model that’s right today may not be right next year. A protocol that’s the open standard today may have a successor. A workflow tool that’s adequate at our scale may not fit a 10× larger one. The discipline isn’t “we chose right and we’re done”; it’s “we chose intentionally, we work the choice, and we change it when reality demands a change.” This appendix gets revisited with every minor whitepaper revision.

Changelog from v1.1

| Change | Why |
|---|---|
| Lead narrative restructured from 8-tier architecture to four-stage data flow | Buyers don’t buy architecture; they buy trustworthy financial data flows. The tiers are proof, not pitch. |
| 8-Tier Stack moved to Technical Appendix | Engineering audience still gets the depth; prospect audience gets the value frame first. |
| Tier 3 corrected: pgvector → ChromaDB | v1.1 was architecturally aspirational; v1.2 names what’s actually running. |
| Tier 7 corrected: OpenClaw + n8n AI Agent nodes | The conversational / judgment runtime is OpenClaw; we’d shipped without naming it. |
| “Five Finance Fears” replaced with “Five Questions Owners Actually Ask” | Elevation framing over protection framing; matches the questions owners actually open with. |
| Industry-aware classifier surfaced as a primary feature, not a buried mechanic | This is the killer feature for the niche. v1.1 buried it. |
| §Receipts added | The methodology applied to the practice itself: pattern from the companion position paper, scaled for this audience. |
| Bridge to the CFO Operating System added | Stage 4 has a destination, and the destination has a name. |
| Mill metaphor extended: flour is intermediate; bread is the outcome | Flour is leverageable data, but it’s still raw material. The CFO Operating System bakes it into decisions. |
| Marketing absolutes softened across the document | “by design” over “never / always / only”; affirmative reframes that don’t raise the underlying concern. |

Where to start

The three-phase engagement arc — Assessment, Bookkeeping, CFO Operating System.

This whitepaper makes a case. The case has an obvious next step.

A real measurement of where your business actually stands on the Financial Maturity Staircase. The disciplines described above are scale-independent — the SMB-appropriate version is reachable inside a year of focused work for most firms — but the path is sequenced, and skipping rungs doesn’t work.

Take the Tax Ready Assessment

A no-obligation scored diagnostic across 51 criteria in 9 categories. Places your business on the Financial Maturity Staircase, surfaces the specific gaps that matter most, and produces a 90-day roadmap. Yours to keep whether or not you engage further.

Schedule 20 Minutes

A conversation with Don for firms where bookkeeping isn’t the obvious starting point. Same methodology, different entry point.

Tax Ready Bookkeeping™ + The AI Stack · Whitepaper v1.2 · ProjectBits Consulting · Don Lovett